
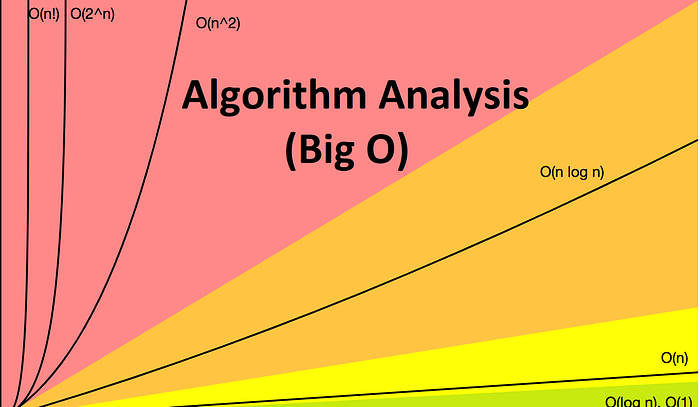

Big O Algorithm Analysis

Big O Algorithm Analysis Using Code Snippets

In computer science, algorithm analysis determines the amount of resources (such as time and/or storage) needed to execute an algorithm or program. The running time or efficiency of an algorithm is usually expressed as a function that relates the input size to the number of steps performed (time complexity) or the number of storage locations used (space complexity).

Algorithm analysis is an important part of computational complexity theory. It gives us theoretical estimates of the resources an algorithm needs to solve a problem, and these estimates help us understand and compare efficient algorithms for tasks such as sorting and searching.

Big O, Big-Omega, and Big-Theta are asymptotic notations used in the theoretical analysis of algorithms. They describe how an algorithm's cost grows as the input size becomes arbitrarily large, giving an upper bound, a lower bound, and a tight bound, respectively.
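For reference, the standard textbook definitions of these three notations can be written as follows. Here f(n) stands for the cost of an algorithm on an input of size n and g(n) for the comparison function; the constants c, c_1, c_2 and n_0 are the usual ones from the formal definitions, not terms introduced by this article.

```latex
\begin{aligned}
f(n) \in O(g(n)) &\iff \exists\, c > 0,\ n_0 \ \text{such that}\ 0 \le f(n) \le c\, g(n) \ \text{for all } n \ge n_0 \\
f(n) \in \Omega(g(n)) &\iff \exists\, c > 0,\ n_0 \ \text{such that}\ 0 \le c\, g(n) \le f(n) \ \text{for all } n \ge n_0 \\
f(n) \in \Theta(g(n)) &\iff \exists\, c_1, c_2 > 0,\ n_0 \ \text{such that}\ 0 \le c_1\, g(n) \le f(n) \le c_2\, g(n) \ \text{for all } n \ge n_0
\end{aligned}
```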

Let us take a look at some common running times and source code snippets below.

O(1)

The statement takes a constant amount of time no matter how large n is, so it is O(1).

int y = n + 25;
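To make "constant" concrete, here is a minimal C sketch that wraps the statement in a hypothetical function, `constant_steps`, and counts the basic steps it performs. The function name and the step counter are illustrative assumptions, not part of the original snippet.

```c
#include <assert.h>

/* Hypothetical helper: executes the O(1) statement once and returns
   how many basic steps it took. The count never depends on n. */
long constant_steps(int n) {
    assert(n >= 0);   /* input size is assumed to be non-negative */
    long steps = 0;
    int y = n + 25;   /* the statement from the example */
    steps++;          /* counted as a single step */
    (void)y;          /* y is not used further; silences unused-variable warnings */
    return steps;
}
```

Calling `constant_steps(10)` and `constant_steps(1000000)` both return 1: the work stays the same as n grows.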

O(n)

If the for loop runs n times and each iteration does a constant amount of work, it is O(n).

for (int i = 0; i < n; i++) {
    sum++;
}
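The same counting idea applied to the loop: the hypothetical function `linear_steps` below returns the final value of `sum`, which equals the number of iterations. This is an illustrative sketch that assumes `sum` starts at 0.

```c
#include <assert.h>

/* Hypothetical helper: runs the O(n) loop and returns how many times
   the body executed. Each iteration does one constant-time increment. */
long linear_steps(int n) {
    assert(n >= 0);   /* input size is assumed to be non-negative */
    long sum = 0;
    for (int i = 0; i < n; i++) {
        sum++;        /* one basic step per iteration */
    }
    return sum;       /* equals n */
}
```

`linear_steps(10)` returns 10 and `linear_steps(20)` returns 20: doubling n doubles the work, which is what linear growth means.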

O(n²)

If two nested loops each run n times, the inner statement executes n × n times, so the cost is O(n*n), or O(n²).
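Counting how often the innermost statement runs makes the n × n figure concrete. In the sketch below, `quadratic_steps` is a hypothetical name and `sum` is assumed to start at 0; the returned value is the number of times `sum++` executed.

```c
#include <assert.h>

/* Hypothetical helper: runs two nested loops of n iterations each and
   returns how many times the innermost statement executed. */
long quadratic_steps(int n) {
    assert(n >= 0);   /* input size is assumed to be non-negative */
    long sum = 0;
    for (int i = 0; i < n; i++) {
        for (int j = 0; j < n; j++) {
            sum++;    /* runs n times for each of the n outer iterations */
        }
    }
    return sum;       /* equals n * n */
}
```

`quadratic_steps(10)` returns 100, while `quadratic_steps(20)` returns 400: doubling n quadruples the work.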

for (int i = 0; i < n; i++)
    for (int j = 0; j < n; j++)
        sum++;

O(log n)

If the loop variable i is multiplied (or divided) by a constant factor on each iteration instead of being incremented, a loop bounded by n runs only about log(n) times, so the cost is O(log n).

for (int i = 1; i < n; i *= 2)   // i must start at 1: with i = 0, i *= 2 never changes i
    sum++;
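A minimal sketch that counts the iterations of a doubling loop and of its halving counterpart; both function names are hypothetical. The doubling loop starts `i` at 1, since starting at 0 would leave `i` at 0 forever. Its index is a `long long`, an assumption chosen so that doubling past a large `int` value of n cannot overflow.

```c
#include <assert.h>

/* Hypothetical helper: counts iterations of a loop whose index doubles.
   For n >= 1 the result is ceil(log2(n)); for n == 0 it is 0. */
long doubling_steps(int n) {
    assert(n >= 0);   /* input size is assumed to be non-negative */
    long sum = 0;
    /* i starts at 1 because 0 * 2 == 0 would never reach n.
       long long keeps i *= 2 from overflowing when n is near INT_MAX. */
    for (long long i = 1; i < n; i *= 2) {
        sum++;
    }
    return sum;
}

/* Hypothetical helper: counts iterations of a loop whose index halves.
   For n >= 1 the result is floor(log2(n)); for n == 0 it is 0. */
long halving_steps(int n) {
    assert(n >= 0);
    long sum = 0;
    for (int i = n; i > 1; i /= 2) {
        sum++;
    }
    return sum;
}
```

For n = 1000, `doubling_steps` returns 10, because i takes the values 1, 2, 4, ..., 512 before reaching 1024. Multiplying n by 1000 (to 1,000,000) only raises the count to 20, which is why O(log n) loops scale so well.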